Through the 1950s, the data that drove NC machines came mainly from punched cards, which were produced through arduous manual processes. The turning point in the development of CNC came when the cards were replaced by computer control, a change that directly reflected advances in computer technology as well as in computer-aided design (CAD) and computer-aided manufacturing (CAM) programs. Machining became one of the first applications of modern computer technology.

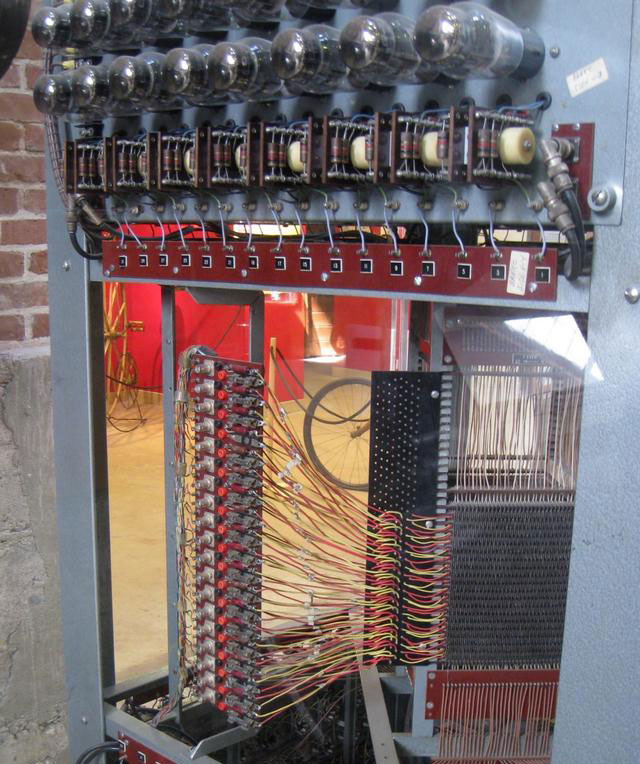

Although the Analytical Engine designed by Charles Babbage in the mid-1800s is considered the first computer in the modern sense, the real-time computer Whirlwind I at the Massachusetts Institute of Technology (MIT), also born in the Servomechanisms Laboratory, was the world's first computer with parallel computing and magnetic-core memory (see the figure). The team was able to use the machine to program the computer-controlled production of punched tape. The original machine used about 5,000 vacuum tubes and weighed about 20,000 pounds.

The slow pace of computer development during this period was only part of the problem. The people trying to sell the idea did not really know manufacturing; they were computer experts. The concept of NC was so foreign to manufacturers that adoption was very slow, to the point that the U.S. Army eventually had to build 120 NC machines and lease them to various manufacturers to begin popularizing their use.

Timeline of the evolution from NC to CNC

Mid-1950s: G-code, the most widely used NC programming language, was born in the MIT Servomechanisms Laboratory. G-code tells computerized machine tools how to make something. Each command is sent to the machine controller, which then tells the motors how fast to move and which path to follow.
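To make the idea of "speed of movement and path to follow" concrete, here is a minimal Python sketch of how a controller might read a few G-code blocks. It is an illustration only, not how any real controller is implemented: the four-line program, the function names, and the 3000 mm/min rapid rate are all assumptions made for this example. It handles only `G0` (rapid move), `G1` (straight cut), `X`/`Y` coordinates, and the `F` feed rate.

```python
import math
import re

# Hypothetical program for illustration: G0 = rapid move,
# G1 = straight cut at feed rate F (mm/min); coordinates in mm.
PROGRAM = """
G0 X0 Y0
G1 X30 Y0 F600
G1 X30 Y40
G0 X0 Y0
"""

# One G-code "word" is a letter followed by a number, e.g. X30 or F600.
WORD = re.compile(r"([A-Z])(-?\d+(?:\.\d+)?)")


def parse_line(line):
    """Split a block such as 'G1 X30 Y0 F600' into {'G': 1.0, 'X': 30.0, ...}."""
    return {letter: float(value) for letter, value in WORD.findall(line.upper())}


def trace(program, rapid_feed=3000.0):
    """Return a list of (mode, start, end, feed) tuples, one per move.

    rapid_feed is an assumed machine limit used for G0 moves, and also
    as the starting feed if a G1 appears before any F word.
    """
    x = y = 0.0
    mode, feed = 0, rapid_feed
    moves = []
    for raw in program.splitlines():
        words = parse_line(raw)
        if not words:
            continue  # skip blank lines
        if "G" in words:
            mode = int(words["G"])  # the motion mode persists until changed
        if "F" in words:
            feed = words["F"]  # the feed rate also persists
        nx, ny = words.get("X", x), words.get("Y", y)
        if (nx, ny) != (x, y):
            moves.append((mode, (x, y), (nx, ny), rapid_feed if mode == 0 else feed))
        x, y = nx, ny
    return moves


def cutting_time_minutes(moves):
    """Time spent in G1 cutting moves: distance divided by feed rate."""
    return sum(math.dist(a, b) / f for mode, a, b, f in moves if mode == 1)


moves = trace(PROGRAM)
for mode, start, end, feed in moves:
    print(f"G{mode}: {start} -> {end} at {feed:g} mm/min")
print(f"cutting time: {cutting_time_minutes(moves) * 60:.1f} s")
```

The motion mode and feed rate carry over from one block to the next, which is why the third line of the sample program can omit `F600`. Real G-code dialects add arcs, units, tool changes, and many machine-specific codes that this sketch ignores.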

1956: The Air Force proposed creating a general-purpose programming language for numerical control. A new MIT research group, led by Doug Ross and named the Computer Applications Group, began studying the proposal and developing what later became known as the Automatically Programmed Tool (APT) programming language.

1957: The Aircraft Industries Association and a division of the Air Force collaborated with MIT to standardize the work on APT and created the first official CNC machine. APT, created before the invention of the graphical interface and FORTRAN, used text alone to pass geometry and tool paths to numerical control (NC) machines. (A later version was written in FORTRAN, and APT was eventually released to the civilian sector.)

1957: While working at General Electric, American computer scientist Patrick J. Hanratty developed and released an early commercial NC programming language called PRONTO, which laid the foundation for future CAD programs and earned him the informal title of "father of CAD/CAM".

"On March 11, 1958, a new era of manufacturing production was born. For the first time in the history of manufacturing, multiple electronically controlled large-scale production machines operated simultaneously as an integrated production line. These machines were almost unattended, and they could drill, bore, mill, and pass unrelated parts between machines."

1959: The MIT team held a press conference to demonstrate its newly developed CNC machine tools.

1959: The Air Force signed a one-year contract with the MIT Electronic Systems Laboratory to develop the "Computer-Aided Design Project". The resulting system, Automated Engineering Design (AED), was released into the public domain in 1965.

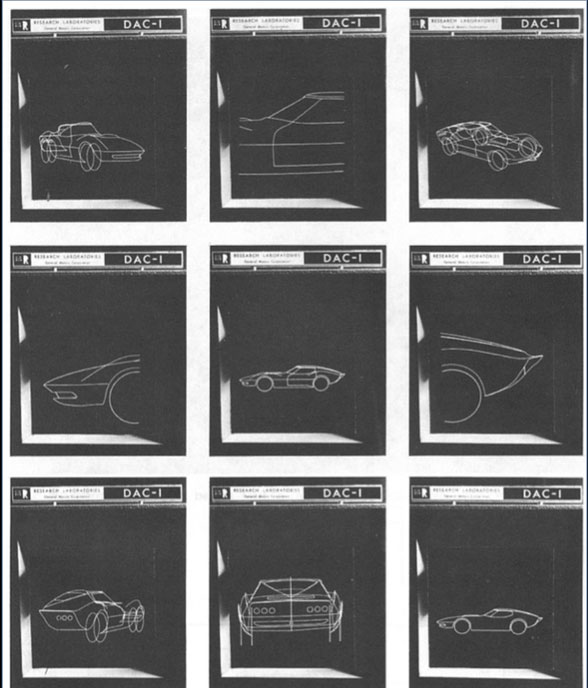

1959: General Motors (GM) began work on what was later called Design Augmented by Computers (DAC-1), one of the earliest graphical CAD systems. The following year, GM brought in IBM as a partner. Drawings could be scanned into the system, which digitized them so they could be modified. Other software could then convert the lines into 3D shapes and output them to APT for sending to a milling machine. DAC-1 went into production in 1963 and made its public debut in 1964.

1962: The first commercial graphical CAD system, the Electronic Drafting Machine (EDM), developed by the U.S. defense contractor Itek, was launched. It was acquired by Control Data Corporation, a mainframe and supercomputer company, and renamed Digigraphics. It was initially used by Lockheed and other companies to manufacture production parts for the C-5 Galaxy military transport aircraft, the first case of an end-to-end CAD/CNC production system.

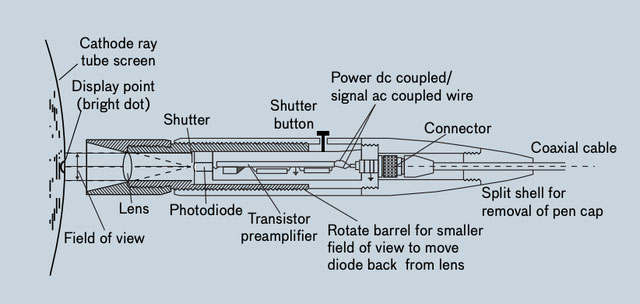

Time magazine ran an article on EDM in March 1962, noting that the operator's designs passed through a console into an inexpensive computer, which solved the problems and stored the answers in digital form and on microfilm in its memory bank. Simply by pressing buttons and sketching with a light pen, the engineer could enter into a running dialogue with EDM, recall any of his earlier drawings to the screen within a millisecond, and change their lines and curves at will.

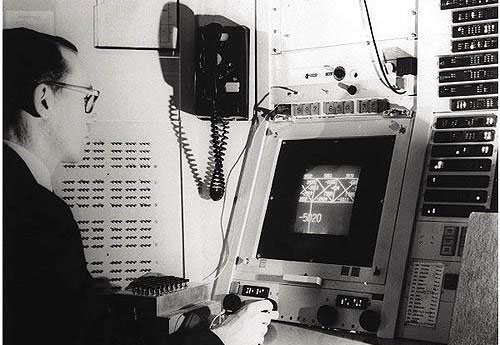

Ivan Sutherland working on the TX-2

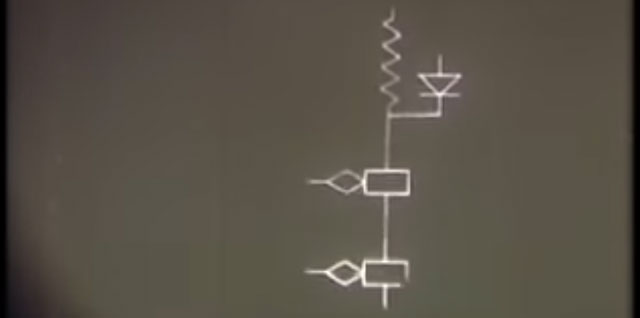

Schematic diagram of the light pen

At the time, mechanical and electrical designers needed a tool to speed up the arduous, time-consuming work they routinely did. To meet this need, Ivan E. Sutherland of the Department of Electrical Engineering at MIT created a system, Sketchpad, that made the digital computer an active partner for designers.

CNC machine tools gain traction and popularity

In the mid-1960s, the emergence of affordable minicomputers changed the rules of the game in the industry. Thanks to new transistor and core memory technology, these powerful machines took up far less space than the room-sized mainframes used until then.

Minicomputers, also known at the time as mid-range computers, naturally carried more affordable price tags. That freed them from the confines of large corporations and the military and put the potential for accuracy, reliability, and repeatability in the hands of smaller companies.

In contrast, microcomputers were 8-bit, single-user, simple machines running simple operating systems (such as MS-DOS), while superminicomputers were 16-bit or 32-bit. Groundbreaking companies included DEC, Data General, and Hewlett-Packard (HP, which now refers to its former minicomputers, such as the HP3000, as "servers").

In the early 1970s, slow economic growth and rising labor costs made CNC machining look like a sound, cost-effective solution, and demand for low-cost NC machine tools increased. While American researchers focused on high-end industries such as software and aerospace, Germany (joined by Japan in the 1980s) focused on the low-cost market and surpassed the United States in machine sales. Even so, the United States at this time had a range of CAD companies and suppliers, including UGS Corp., Computervision, Applicon, and IBM.

In the 1980s, as microprocessors drove down hardware costs and the local area network (LAN), a network that interconnects computers, emerged, the cost and accessibility of CNC machine tools improved as well. By the latter half of the 1980s, minicomputers and large computer terminals had been replaced by networked workstations, file servers, and personal computers (PCs). This freed CNC machines from the universities and companies that had traditionally installed them, because they had been the only ones able to afford the expensive computers that accompanied the machines.

In 1989, the National Institute of Standards and Technology, part of the U.S. Department of Commerce, created the Enhanced Machine Controller project (EMC2, later renamed LinuxCNC), an open-source GNU/Linux software system that uses a general-purpose computer to control CNC machines. LinuxCNC paved the way for personal CNC machine tools, which remain a pioneering application in the field of computing.

Post time: Jul-19-2022